No professional translator can be excused from learning how to use new tools and picking up new techniques for providing better translations. For this reason, our in-house project management and translation team would like to offer some tips for translators to bear in mind throughout a translation project: while they translate, after they finish their translation work, and before they send it to a client.

For many translation agencies or translation companies (better known as language service providers), the translation process involves several stages that freelance translators are often not aware of. We find that translators who have spent some time as trainees at our translation company and have familiarized themselves with all the processes required tend to have a more serious and professional approach than those who have landed in the profession via other means and just learned by trial and error from their home offices.

There is much more to translation than simply typing in a foreign language and using one or two CAT or translation memory tools. A professional translation service typically requires both a revision (or editing) and a proofreading. These are two essential stages that need to take place before we can say that a document is ready to be delivered to the client.

Translation Standard ISO 17100 states that a professional service must carry out each stage independently. This means that the translator cannot be the person who does the final checks (the editor) and the final proofreader must also be a different person to the editor and translator. Often, this is not practical due to time constraints and translators end up proofreading their own work after receiving the editor’s comments.

Neural machine translation is beginning to change this traditional TEP (Translation-Editing-Proofreading) scenario. Its output is often of such high quality (near-human) that a monolingual proofread for style, plus the necessary checks for terminology and numbering accuracy, is quite enough for many clients who want "knowledge extraction" or a more affordable translation service.

Translation Quality Control versus QA

There seems to be confusion in the translation industry between the terms QA and QC, a confusion that arises only among linguists. QA stands for Quality Assurance, which means "the policies in place to ensure a quality philosophy" or, in other words, "providing a guarantee that quality requirements will be fulfilled." It does not mean running a software check. Quality Control means the steps taken to ensure that the product meets the user's expectations. Quality Control measures include running a spellcheck, verifying the number of paragraphs, checking numbers and, of course, reading the final text before delivering it to the next person in the supply chain.
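As a rough illustration of what such Quality Control measures can look like when automated, here is a minimal Python sketch that compares paragraph counts and numbers between a source text and its translation. The function names `extract_numbers` and `qc_report` are hypothetical and do not come from any CAT tool. The sketch also assumes that paragraphs are separated by blank lines and that numbers are written identically in both languages, so a number reformatted for the target locale would be flagged for manual review.

```python
import re
from collections import Counter

# Digit groups, optionally joined by "." or "," (e.g. 12, 3.5, 1,000).
NUMBER_RE = re.compile(r"\d+(?:[.,]\d+)*")


def extract_numbers(text):
    """Return a multiset (Counter) of the numbers found in text."""
    return Counter(NUMBER_RE.findall(text))


def qc_report(source, target):
    """Compare paragraph counts and numbers between source and target.

    Assumption: paragraphs are separated by blank lines and numbers are
    compared as literal strings, with no locale conversion.
    """
    src_pars = [p for p in source.split("\n\n") if p.strip()]
    tgt_pars = [p for p in target.split("\n\n") if p.strip()]
    src_nums = extract_numbers(source)
    tgt_nums = extract_numbers(target)
    return {
        "paragraphs_match": len(src_pars) == len(tgt_pars),
        # Numbers present in the source but absent from the target.
        "missing_numbers": sorted((src_nums - tgt_nums).elements()),
        # Numbers present in the target but absent from the source.
        "extra_numbers": sorted((tgt_nums - src_nums).elements()),
    }


if __name__ == "__main__":
    source = "Tighten to 12 Nm.\n\nUse 3 bolts."
    target = "Serrez a 12 Nm.\n\nUtilisez 2 boulons."
    print(qc_report(source, target))
```

A check like this does not replace reading the final text. It only points to places where a number or a paragraph may have been lost.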

The Quality Control stage has to take place at the source. Japanese industry mastered the "first-time-right" philosophy because each person, from the beginning, has to ensure that the work he or she provides is faultless. But how can you do this if you are just a freelancer?

If you are a freelance translator, you should incorporate a quality control stage into the process before delivering your translation, and you should never send a job to your client without having checked it and having read it beforehand.

It is sometimes hard to ask colleagues to invest their precious time in reading your work or checking your terminology. After all, they are busy translating, too. But no translator should really work in isolation. Times have changed since the advent of translation memories and related tools that make our work more precise. Nowadays, translators have an abundance of information at their disposal on the internet, available in just a few clicks. Checking your work before delivery is a must, and tools such as XBench or QA Distiller are essential on large jobs, where you handle many files and have to keep terminology consistent across all of them.

The point is that when clients and translators talk about “translation,” they are referring to the whole process: translation is the first step in a process generally known as TEP (Translation-Editing-Proofreading). Pangeanic places a lot of importance on quality at the source, and thus delivering a quality translation from the start is essential for the other steps to run smoothly. We have put together 12 tips to help professional translators deliver high-quality translation services and thus reduce inefficient and time-consuming steps and queries:

Tips for Translators

- Make sure you review the document(s) before starting a translation. Read all the instructions that come with the job: they show you the way in which the translation must be approached. You wouldn't call a plumber to repair a leak and leave your house without explaining where and what the problem is. Ensure that you have received all the correct files and documents.

- Make sure that you are comfortable with the subject matter and language style, and confirm this with the Project Manager. Whilst you may take on translations in fields in which you are not an expert for the sake of expanding your business, it will be more difficult for you to master the terminology, and you will have to invest time in doing so. There is nothing wrong with it, but be aware that your own quality checking and revision become even more important. Sadly, there may be some subjects for which you are simply not qualified or that you are not good at. It is OK. Professional translators specialize in a few subjects and, in time, they become so good at them that they hardly take on anything outside their sphere of expertise.

- Make sure you are familiar with the file format. If you are working for a translation company, the files should be sent in a translation-friendly format and with a translation memory. Do not change the CAT tool your client has specified. There is no worse feeling for Translation Project Managers than receiving a file that they have to restructure because of bad formatting. You may have saved some money using a tool that promises full compatibility with this and that format, but if you have not tried it yourself and the original is heavily formatted, you end up wasting the Project Manager’s precious time and ruining a good relationship. They will have to reconstruct the whole file and no matter how good your translation was, wasted time can never be recovered. You risk losing a client.

- Use all reference material, style guides, glossaries and terminology databases. Never ignore a glossary that has been sent to you. If the client has created a database, use it. If it is a simple Excel file, most CAT tools can import this format and create a glossary file in seconds. It is essential that you are consistent with the terminology and style of previous jobs. Quite often, you will not be the first translator involved in a publication process. One-time translation buyers are few and far between, and if you want to succeed in business as a translator, you want regular, paying clients and a regular income. It may be the first time you are translating a particular piece or set of files, or the first time you are translating for a particular client, but they are sure to have bought translation services before, and they expect consistency in style and terminology.

- Contact your Translation Project Manager immediately if you find any problems with the translation memory or the glossary. Previous translators may not have followed it, or perhaps they had a bad day. If there are any quality issues with the material you have been provided, and you do not know whether to follow the translation memory or the glossary, contact the Translation Project Manager and let them know there is a problem with the source. If this is not possible because of time constraints, follow what has been done before, even if your personal style and personal preferences are different. Take note in a separate file of any terminology issues and comments while you are working. You will not feel like doing that or going over the errors once you have finished the translation. Let the Translation Project Manager know what has happened. Remember, feedback is always appreciated, and it helps to build on quality and improvements in the process. You will score many points in your Translation Project Manager’s eyes, and you will build a reputation for yourself as a serious, quality-conscious translator.

- Contact your Translation Project Manager or client immediately if you encounter or foresee any problems with the document, format, word count or delivery time.

- Identify relevant reference sources on the Internet for the subject you are going to translate. If you are going to translate technical documentation for bicycles, find the brand’s website in your language. The manufacturer’s competitors are often a source of good terminology and style. If you are translating documentation for medical devices, you are sure to find some relevant material on related websites. Have all this ready before you begin to translate. It is called “background work,” and it pays off in the short and long term. It is like doing a reference check. Would you accept work from a client you know nothing about? Would you meet someone in real life without delving a little into who they really are? Don’t companies do a reference check on the freelance translators and staff they want to employ? So, keep other online resources specific to the topic you are translating at hand for easy reference. More importantly, become a researcher of the topics you specialize in as a freelancer. Prepare yourself for those days when you have no internet connection and cannot reach your online sources of information but still have work to deliver.

- When you have finished your translation, run your spellchecker and correct any misspellings and typos. Now is the time to become your own editor and read over the document, comparing it to the original. Read again without looking at the source text to make sure that it makes sense. Readers will not have access to your source material and, frankly, they do not care that the text was translated or how it was translated. They want to read in their native language and you, the translator, are the link that allows them to do so. Your version has to read as if it had originally been written in your language, free of literal translations and cumbersome expressions that are directly transferred, and without any errors.

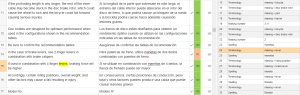

- Check your translation against the source for any missing text or formatting issues. Most CAT tools include QA features as standard. Each tool offers different features, but they are all good at detecting untranslated segments, targets identical to the source, and even missing or wrong numbers. If your CAT tool only offers basic checking procedures, or you want to run more in-depth checks, we recommend using XBench. You can even load translation memories and check their consistency, formatting, coherence across files, missing translations and “suspect translations,” where different source segments have generated the same translation (perhaps through an error in accepting a translation memory match) or, vice versa, where a single source fragment has generated multiple translations. Your clients will certainly appreciate this.

- Do not be literal. Translation buyers and readers never appreciate translations that sound “corseted”, a word-for-word carbon copy of a foreign language. Literal translation is not acceptable unless you are translating technical material, because expressions and idioms seldom translate literally from one language to another. Technical material may include pharma translations, engineering, translations for the automotive sector, medical translations, software translation, patents, etc. Likewise, accuracy and precision take priority over style in legal translations. Many examples and references may seem very relevant and clear to the original writer, but not to the target audience. Some years ago, the British Prime Minister left Japanese translators frozen when he announced, on a visit to Japan, that he was prepared to go “The Full Monty” on his economic policies. The film had not been released in Japan, so the reference meant nothing to his audience. Website translations, any type of books and literature, news clips, CVs, etc. all require a beauty of expression and flow that only comes with a “neutral approach to translation.” You have to distance yourself from your work, and edit and proof it from a critical point of view. You should always look at your translation as if it were the final product. You offer a professional translation service, and each one of your clients is unique. Do not count on editors or proofreaders to fix your unchecked work and your mistakes. Nobody likes to correct other people’s lack of attention.

- Be sure to run your spellchecker again. It will take a couple of minutes if everything is fine. A small typo may have been added during your revision stage, and it would destroy all of the quality steps you have carried out until now.

- Remember to include any notes or comments for your client, or for the editors, about your translation in your delivery file. A blank delivery with your signature, or a “please find files attached,” shows little interaction with your client. It may be taken as a sign that, if you did not have time to write two lines about the delivery of the project, you probably did not have time to do a quality check at all. Thank the Translation Project Manager for the job and say you look forward to the next one. If there are simply no issues to raise, say that the job went smoothly. Perhaps the translation memory was very good, or you felt very comfortable and enjoyed translating in your field of expertise.

[Figure: Quality Assurance in Memsource]

If you found problems or issues, list them in a separate document attached to the delivery, and tell your Translation Project Manager to refer to it. There may have been a reason why you chose a particular translation or term, or why you had to deviate from standard terminology or the glossary in your language. However, if you do not warn the editors in advance, they will assume that there is an error, and this will waste time on both sides. The editors and checkers will start to find unexpected translations or terms and, without that warning, a series of translation queries will follow.
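The checks mentioned in the tip on comparing your translation against the source (untranslated segments, target identical to source, and “suspect translations”) can be sketched in a few lines of Python. This is only an illustration of the idea, not how XBench or any CAT tool works internally. The function name `check_segments` is hypothetical, and the sketch assumes that your segments have already been exported as simple (source, target) pairs.

```python
from collections import defaultdict


def check_segments(segments):
    """Flag common bilingual QC issues.

    segments: list of (source, target) string pairs, assumed to have
    been exported from a bilingual file or translation memory.
    Returns a list of (location, issue) tuples.
    """
    issues = []
    targets_by_source = defaultdict(set)
    sources_by_target = defaultdict(set)

    for index, (source, target) in enumerate(segments):
        src, tgt = source.strip(), target.strip()
        if not tgt:
            issues.append((index, "untranslated"))
            continue
        if src == tgt:
            # May be legitimate (names, codes), so review by hand.
            issues.append((index, "source same as target"))
        targets_by_source[src].add(tgt)
        sources_by_target[tgt].add(src)

    # A single source segment that produced several translations.
    for src, tgts in targets_by_source.items():
        if len(tgts) > 1:
            issues.append((src, "one source, multiple translations"))

    # Different source segments that produced the same translation.
    for tgt, srcs in sources_by_target.items():
        if len(srcs) > 1:
            issues.append((tgt, "different sources, same translation"))

    return issues


if __name__ == "__main__":
    sample = [
        ("Open the valve.", "Ouvrez la vanne."),
        ("Close the valve.", ""),
        ("OK", "OK"),
        ("Open the valve.", "Ouvrir la vanne."),
        ("Shut the valve.", "Fermez la vanne."),
        ("Close the valve.", "Fermez la vanne."),
    ]
    for location, issue in check_segments(sample):
        print(location, "->", issue)
```

Every flagged item is a candidate for review rather than a confirmed error. Identical source and target can be correct for product names, and two source sentences may legitimately share one translation. Any flag you decide to keep is worth mentioning in your delivery notes.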
